P-Hacking

It turns out that science has a bug! If you test many hypotheses but only report the one with the lowest p-value you are more likely to get a spurious result (one resulting from chance, not a real pattern).

Recall p-values: A p-value was meant to represent the probability of a spurious result. It is the chance of seeing a difference in means (or in whichever statistic you are measuring) at least as large as the one observed in the dataset if the two populations were actually identical. A p-value < 0.05 is considered "statistically significant". In class we compared sample means of two populations and calculated p-values. What if we had 5 populations and searched for pairs with a significant p-value? This is called p-hacking!

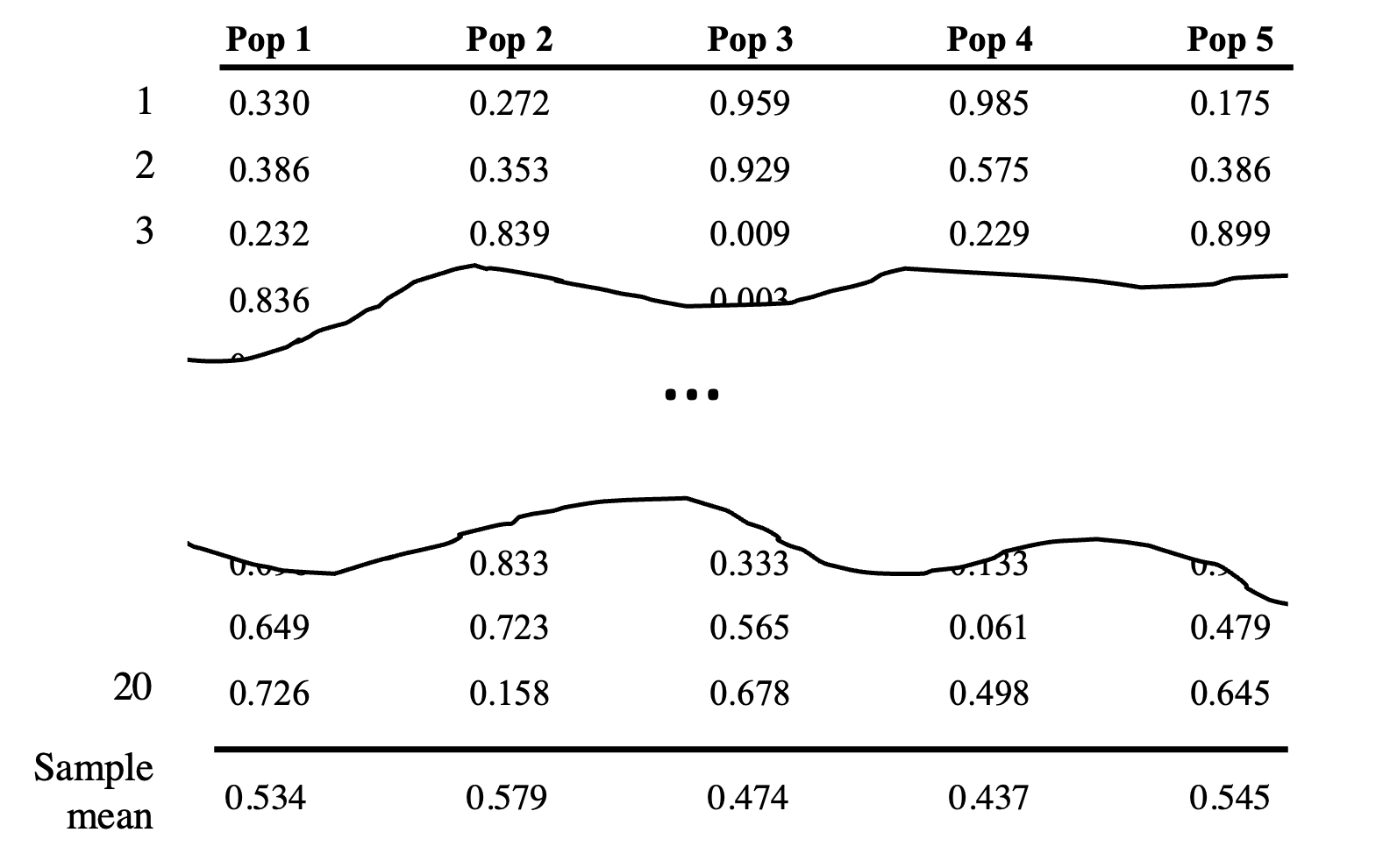

To explore this idea, we are going to look for patterns in a dataset which is totally random – every value is Uniform(0,1) and independent of every other value. There is clearly no significance in any difference in means in this toy dataset. However, we might find a result which looks statistically significant just by chance. Here is an example of a simulated dataset with 5 random populations, each of which has 20 samples:

The numbers in the table above are just for demonstration purposes. You should not base your answer off of them. We call each population a random population to emphasize that there is no pattern.

There are Many comparisons

How many ways can you choose a pair of two populations from a set of five to compare? The values of elements within the population do not matter nor does the order of the pair.

Understanding the mean of IID Uniforms

What is the variance of a Uniform(0, 1)?

What is an approximation for the distribution of the mean of 20 samples from Uniform(0,1)?

What is an approximation for the distribution of the mean from one population minus the mean from another population? Note: this value may be negative if the first population has a smaller mean than the second.

$X_1\sim N(\mu = 0.5 , \sigma^2 = \frac{1}{240})$

$X_2\sim N(\mu = 0.5 , \sigma^2 = \frac{1}{240})$ The expectation is simple to calculate because $$E[X_1 - X_2] = E[X_1] - E[X_2] = 0$$ \begin{align*} \Var(X_1 - X_2) &= \Var(X_1) + \Var(X_2) \\ &= \frac{1}{120} \end{align*} The sum (or difference) of independent normals is still normal: \fbox{$Y \sim N(\mu = 0, \sigma^2 = \frac{v}{10})$}

# the smallest difference in means that would look statistically significant k = calculate_k() # create a matrix with n_rows by n_cols elements, each of which is Uni(0, 1) matrix = random_matrix(n_rows, n_cols) # from the matrix, return the column (as a list) which has the smallest mean min_mean_col = get_min_mean_col(matrix) # from the matrix, return the row (as a list) which has the largest mean max_mean_col = get_max_mean_col(matrix) # calculate the p-value between two lists using bootstrapping (like in pset5) p_value = bootstrap(list1, list2)Write pseudocode:

n_significant = 0

k = calculate_k()

for i in range(N_TRIALS):

dataset = random_matrix(20, 5)

col_max = get_max_mean_col(dataset)

col_min = get_min_mean_col(dataset)}

diff = np.mean(col_max) - np.mean(col_min)}

if diff >= k:

n_significant += 1}

print(n_significant / N_TRIALS)